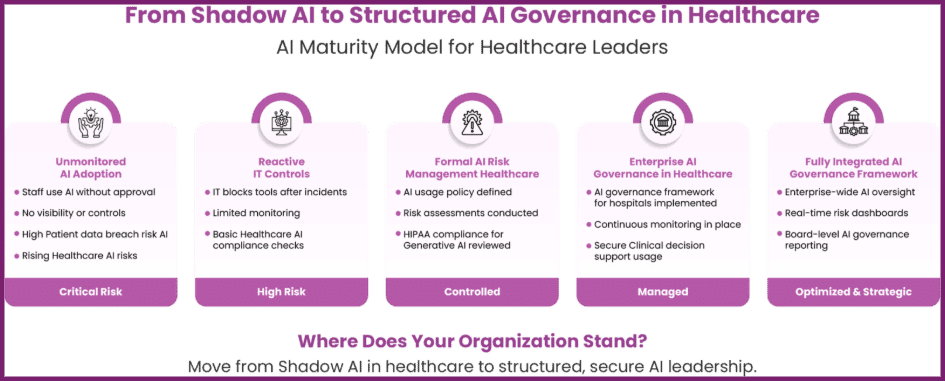

Healthcare leaders across global markets face a new governance challenge: shadow AI in healthcare. Clinicians, administrators, researchers, and even vendors now use powerful AI tools without formal approval, oversight, or security validation. While innovation accelerates, unmanaged adoption exposes organisations to significant healthcare AI risks, regulatory scrutiny, and a rising risk of patient data breaches.

IBM's Cost of a Data Breach Report 2023 found that healthcare had the highest average breach cost of any sector, at $10.93 million per incident. Published enterprise audit trend reports also point to a sharp rise in unauthorised AI tools within corporate environments, including healthcare delivery networks.

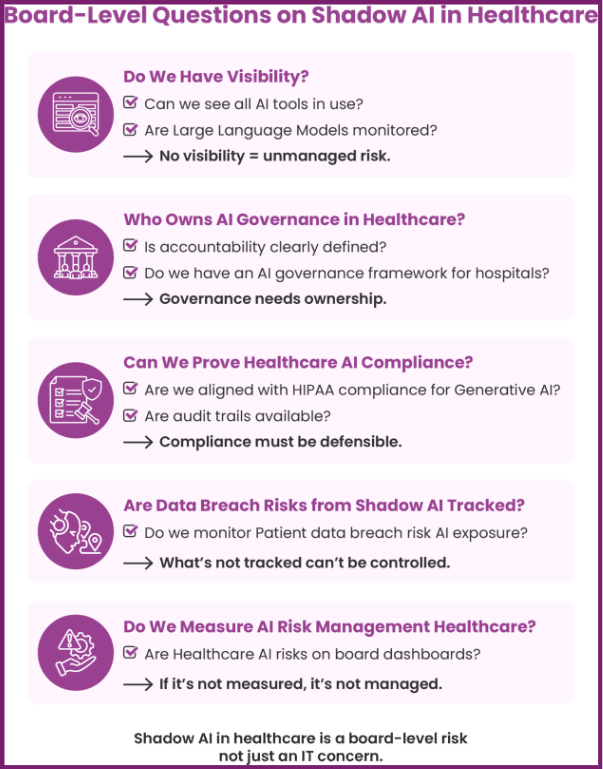

For CIOs, CISOs, CMIOs, and compliance leaders, the issue is no longer whether to ban tools. It is how to build structured AI risk management strategies for healthcare that protect innovation while strengthening governance.

Understanding Shadow AI in Healthcare and the Expanding Risk Surface

Shadow AI in healthcare refers to the use of artificial intelligence tools, including public Large Language Models, without centralised approval or governance. Staff often experiment with generative AI for documentation, coding assistance, clinical research summaries, or patient communication drafting. Leaders often discover these tools only after a security review or compliance incident.

Unlike traditional shadow IT, AI tools interact directly with patient data, clinical notes, diagnostic summaries, and operational datasets. That interaction creates immediate healthcare AI risks tied to confidentiality, integrity, and data sovereignty.

Hospitals operate in high-pressure environments. Clinicians seek faster documentation workflows. Administrators aim to reduce billing delays. Researchers want accelerated literature reviews. These productivity demands encourage rapid experimentation, often without any alignment to healthcare AI governance principles.

Without a structured AI governance framework for hospitals, organisations struggle to identify which AI tools staff use, how they process data, and where that data resides. This visibility gap amplifies both operational and compliance exposure.

Healthcare AI Risks: Security, Clinical, and Financial Exposure

Data Security and Patient Data Breach Risk

When staff upload clinical summaries into external AI platforms, they may unknowingly transmit protected health information (PHI). That behaviour raises the risk of a patient data breach, especially if vendors store that data or train models on it.

Cybersecurity firms such as Cyera and Securiti report that organisations frequently underestimate data breach risks from shadow AI because AI tools bypass traditional endpoint monitoring systems. Unauthorised API integrations and browser-based tools further complicate detection.

Healthcare leaders must treat healthcare AI risks as enterprise-level threats, not isolated user mistakes. Each unapproved AI interaction can expand the attack surface and increase the probability of regulatory investigation.

Regulatory and Healthcare AI Compliance Challenges

Regulators increasingly scrutinise AI usage in healthcare. Organisations must evaluate healthcare AI compliance obligations under HIPAA, GDPR, and emerging AI regulations.

Many executives ask whether public generative AI platforms meet HIPAA requirements. Most public tools are not compliant by default: organisations typically need a signed business associate agreement with the vendor and strict controls over what data staff may submit.

Without clear policies, teams cannot demonstrate mature AI risk management during audits. Regulators expect documented oversight, risk assessments, and governance structures. Informal experimentation undermines defensibility.

Clinical Decision Support and Safety Risks

AI tools increasingly influence clinical decision support workflows. Clinicians may rely on outputs from large language models (LLMs) to summarise research, draft differential diagnoses, or interpret guidelines.

However, generative models can produce hallucinated or biased outputs. If clinicians rely on unvalidated outputs, organisations expose themselves to malpractice liability and heightened healthcare AI risks. A 2023 JAMA study on generative AI responses in clinical scenarios showed that AI-generated medical advice occasionally included unsafe recommendations.

Hospitals must integrate AI governance in healthcare policies that clearly define how teams can use AI in clinical decision support contexts. Leaders must also evaluate model performance and bias testing as part of their AI governance framework for hospitals.

Why Traditional Governance Fails in Shadow AI in Healthcare

Legacy IT governance models focus on infrastructure, software licensing, and vendor contracts. They rarely address AI tools that staff reach directly through browsers or APIs. As a result, shadow AI in healthcare grows outside the view of the teams responsible for managing it.

Most organisations lack continuous AI discovery capabilities. Security teams may detect malware but miss routine interactions with external AI platforms. This oversight gap increases data breach risks from shadow AI and weakens healthcare AI compliance posture.

In addition, fragmented accountability slows response. IT teams focus on security. Compliance teams focus on regulations. Clinical leaders focus on patient outcomes. Without a unified approach to AI governance in healthcare, organisations cannot enforce consistent policies.

Building AI Governance in Healthcare: Strategic Frameworks for Enterprises

Healthcare leaders must move from reactive containment to structured enablement. A formal AI governance framework for hospitals anchors this transformation.

• Establish Enterprise-Level AI Oversight

Executive teams should create cross-functional AI governance councils that include IT, compliance, legal, clinical leadership, and cybersecurity. This council must define risk tiers, approve use cases, and oversee healthcare AI risk management programs.

Clear governance reduces healthcare AI risks and strengthens executive accountability. Leaders should align governance policies with enterprise risk registers and board-level reporting structures.
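To make the idea of risk tiers concrete, the following is a minimal sketch of how a governance council might encode a tiering rule. The tier names, the three yes/no attributes, and the rule itself are illustrative assumptions, not a standard or a recommendation from any regulator; a real council would define its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical tier names; a council would choose its own.
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


@dataclass(frozen=True)
class AIUseCase:
    name: str
    handles_phi: bool            # does the tool see protected health information?
    informs_clinical_care: bool  # do its outputs feed clinical decisions?
    externally_hosted: bool      # is data processed outside the organisation?


def assign_tier(use_case: AIUseCase) -> RiskTier:
    """Assumed rule: clinical influence, or PHI leaving the organisation, is HIGH."""
    if use_case.informs_clinical_care:
        return RiskTier.HIGH
    if use_case.handles_phi and use_case.externally_hosted:
        return RiskTier.HIGH
    if use_case.handles_phi or use_case.externally_hosted:
        return RiskTier.MODERATE
    return RiskTier.LOW


if __name__ == "__main__":
    examples = [
        AIUseCase("Drafting internal meeting notes", False, False, False),
        AIUseCase("Literature review on a public chatbot", False, False, True),
        AIUseCase("Differential diagnosis drafting", True, True, True),
    ]
    for case in examples:
        print(f"{case.name}: {assign_tier(case).value}")
```

Encoding the rule as a small function keeps tier decisions consistent and auditable; the council can then attach approval requirements to each tier rather than debating every tool from scratch.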

• Develop Clear Usage Policies and HIPAA Compliance for Generative AI

Organisations must articulate explicit rules regarding HIPAA compliance for generative AI tools. Policies should clarify approved platforms, restricted data types, and escalation pathways.

Transparent policies directly reduce exposure to patient data breaches. They also demonstrate proactive healthcare AI compliance to regulators.

• Implement Continuous Monitoring and Risk Assessment

Continuous discovery tools help identify unauthorised AI usage. Security teams must integrate AI monitoring into SOC workflows to address data breach risks from shadow AI.

Organisations should also perform structured risk assessments for all AI tools, including Large Language Models used in clinical decision support. These assessments must evaluate model transparency, bias, and vendor security posture.
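As an illustration of continuous discovery, the sketch below scans web proxy logs for traffic to AI services that are not on an approved list. Everything specific here is an assumption: the CSV log format with `user` and `host` columns, the `.example` domain names, and the approved list are placeholders, and commercial discovery tools work with far richer signals.

```python
import csv
import io
from collections import Counter

# Hypothetical watchlist of AI service hostnames (placeholder .example domains).
KNOWN_AI_DOMAINS = {
    "chat.vendor-a.example",
    "api.vendor-b.example",
    "llm.vendor-c.example",
}
# Hypothetical set of services the governance council has approved.
APPROVED_AI_DOMAINS = {"api.vendor-b.example"}

# Assumed proxy log export: one request per row, with 'user' and 'host' columns.
SAMPLE_LOG = """user,host
dr_lee,chat.vendor-a.example
dr_lee,chat.vendor-a.example
billing01,api.vendor-b.example
nurse_kim,intranet.hospital.example
res_patel,llm.vendor-c.example
"""


def find_unapproved_ai_usage(log_file) -> Counter:
    """Count requests per (user, host) to known AI services that are not approved."""
    usage = Counter()
    for row in csv.DictReader(log_file):
        host = row["host"].strip().lower()
        if host in KNOWN_AI_DOMAINS and host not in APPROVED_AI_DOMAINS:
            usage[(row["user"], host)] += 1
    return usage


if __name__ == "__main__":
    findings = find_unapproved_ai_usage(io.StringIO(SAMPLE_LOG))
    for (user, host), count in findings.most_common():
        print(f"{user} -> {host}: {count} request(s)")
```

A report like this is a starting point for a conversation with the staff involved, not proof of a breach. It shows which tools people actually want, which in turn informs what the organisation should approve or provide internally.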

• Invest in Workforce Education

Training programs strengthen the maturity of AI governance in healthcare. Clinicians must understand appropriate use cases for AI in clinical decision support environments. Administrators must recognise the financial and legal implications of healthcare AI risks.

Education reduces unauthorised experimentation and supports long-term AI risk management objectives.

Enterprise Controls to Mitigate Shadow AI in Healthcare

Effective control mechanisms translate governance into action.

Data Loss Prevention Systems: DLP solutions can detect and block sensitive data uploads to unauthorised AI platforms, reducing the risk of a patient data breach.

Role-Based Access Controls: Identity governance ensures only authorised staff access approved AI tools.

Secure AI Sandboxes: Internal AI environments allow experimentation without exposing sensitive data to external vendors.

Vendor Risk Assessments: Organisations must evaluate third-party AI vendors against healthcare AI compliance standards and incorporate AI-specific clauses into contracts.

Model Risk Management Programs: Structured validation processes ensure Large Language Models used for clinical decision support meet safety benchmarks.

Each control supports the broader objectives of an AI governance framework for hospitals and strengthens enterprise resilience against healthcare AI risks.
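Two of the controls above, data loss prevention and role-based access, can be sketched together as a single pre-submission check. This is a simplified illustration under stated assumptions: the regular expressions, the MRN format, the role names, and the tool names are all hypothetical, and pattern matching of this kind catches only obvious identifiers. Production DLP products use much broader detection.

```python
import re

# Illustrative patterns only; real PHI detection needs far wider coverage.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # Hypothetical medical record number format: "MRN" followed by 6-10 digits.
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
    "dob": re.compile(
        r"\b(?:DOB|date of birth)[:\s]*\d{1,2}/\d{1,2}/\d{2,4}\b", re.IGNORECASE
    ),
}

# Hypothetical mapping of staff roles to the AI tools they may use.
ROLE_TOOL_ACCESS = {
    "clinician": {"internal-sandbox"},
    "researcher": {"internal-sandbox", "literature-assistant"},
}
# Hypothetical internal tools where PHI never leaves the organisation.
PHI_SAFE_TOOLS = {"internal-sandbox"}


def detect_phi(text: str) -> list:
    """Return the sorted names of PHI patterns found in the text."""
    return sorted(name for name, pattern in PHI_PATTERNS.items() if pattern.search(text))


def check_request(role: str, tool: str, text: str) -> tuple:
    """Apply the role check first, then the DLP check for tools outside the sandbox."""
    if tool not in ROLE_TOOL_ACCESS.get(role, set()):
        return False, f"role '{role}' is not authorised for tool '{tool}'"
    hits = detect_phi(text)
    if hits and tool not in PHI_SAFE_TOOLS:
        return False, "blocked: possible PHI detected (" + ", ".join(hits) + ")"
    return True, "allowed"


if __name__ == "__main__":
    print(check_request("researcher", "literature-assistant",
                        "Summarise recent sepsis guidelines"))
    print(check_request("researcher", "literature-assistant",
                        "Summarise history for MRN: 12345678"))
    print(check_request("clinician", "literature-assistant", "Hello"))
```

Checking the role before scanning the text means unauthorised requests are rejected without the content being inspected at all. Exempting the internal sandbox from the PHI block reflects the purpose of a secure sandbox as described above.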

From Shadow AI in Healthcare to Structured Innovation

Healthcare organisations cannot eliminate AI adoption. They can, however, structure it responsibly. Leaders who implement robust AI governance in healthcare frameworks reduce data breach risks from shadow AI and protect patient trust.

Forward-thinking health systems treat shadow AI in healthcare as a catalyst for modernisation. They invest in secure platforms, formal oversight, and continuous AI risk management programs. They align governance with innovation, rather than positioning compliance as a barrier.

The strategic question for boards and executives is no longer whether AI will enter clinical workflows. AI already shapes clinical decision support, operational analytics, and patient engagement strategies. The real question is whether organisations will control AI adoption or allow uncontrolled tools to define enterprise risk exposure.

Hospitals that prioritise healthcare AI compliance, reduce the risk of patient data breaches, and implement scalable governance structures will lead the next phase of digital healthcare transformation. Shadow AI represents a challenge. Structured governance transforms it into a competitive advantage.